Artificial Intelligence and the Politics of War

The Delusions of the AI Revolution Part I

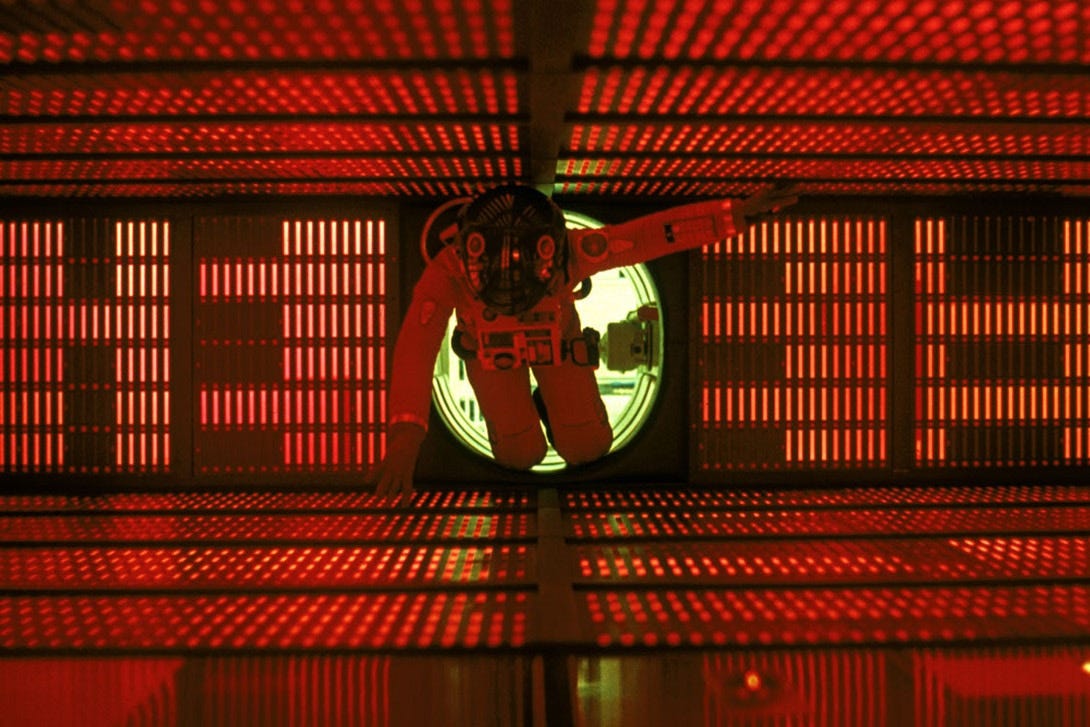

2001: A Space Odyssey

Thanks to some tech bros in Silicon Valley, much is being made of what will happen if (and probably when) AI is integrated into military decision-making. Some of the more enthusiastic proponents, like Palmer Luckey, have made sweeping claims that AI will eliminate the fog of war and the friction that define armed conflict.

In their minds, large language models (LLMs) will be able to synthesize vast amounts of information so rapidly that human decision-making during a conflict will be rendered essentially irrelevant. To use Palmer Luckey’s words, with the help of AI “you just can’t be wrong…what you see in China will have been run in a simulation… long before it ever happens.”

While there’s a range of opinions out there on how effective AI will eventually be on the battlefield, this isn’t exactly an uncommon position in the tech enthusiast space, so it’s worth pointing out why it so fundamentally misunderstands war.

Now to start, none of this is new. Going back to the 1990s when the DoD was obsessed with the “Revolution in Military Affairs,” the U.S. military has had a long-term love affair with the notion that technology could eliminate fundamental aspects of warfare.

To put it extremely briefly, the RMA was defined by the belief that the systemic integration of contemporary technology into the military would enable a form of information dominance to the extent that nothing on the battlefield would be unknown.

It was the sort of thing where people believed the vertical and horizontal integration of communications and sensors throughout the force would mean that from your private to the Pentagon, everyone would know exactly what was happening as it happened.

These ideas were fairly widespread across the force, but their loudest proponents came from the USAF, with John A. Warden III’s book The Air Campaign being among the most prominent examples.

He lays out what is essentially a manifesto wherein the technological advancements of the 1980s and 1990s had led to what is now commonly referred to as “systems warfare.” The way to win a contemporary war was to engage in overwhelming and rapid destruction of all key nodes of communications in an adversary’s military to render him essentially blind, deaf, and dumb.

The logic of this was that the systematic destruction of the key nodes of an adversary’s military hierarchy would compel them to admit a timely defeat. The USAF of course was the branch best positioned to do this, and the Gulf War was seen as the template for this form of warfare.

Putting aside the fact that Saddam’s military in 1991 was sort of the perfect enemy for the military the United States had built since Vietnam—a highly rigid force with poor morale, and a total inability to contest our airpower—there’s a lot from this theory that has been vindicated in terms of the changing character of war.

It is of course obvious that the widespread integration of C4ISR across the force has made the United States military more deadly. Precision fires have let us go after essentially anyone we can see on the battlefield, and the focus on systematically destroying an adversary’s ability to communicate is a tremendous joint-force contribution that allows for the rapid exploitation of confused enemy forces.

However, and this is what I wanted to get to here, it never actually changed what war itself is. It is the classic refrain from anyone who has read too much Clausewitz that the character of warfare changes but its nature doesn’t. The mistake that the RMA crowd made in the 1990s, and that the AI tech-bro crowd is making these days, is to think that the nature of war is changing.

War (and this cannot be repeated enough) is and always will be a political endeavor. There is no war without politics. Violence is used to do something concrete. Sure, let’s say you’ve developed your elaborate doctrine on how you can destroy an opponent’s C4ISR structure, now what? What is that doing to get you towards your political goal?

In the case of the Gulf War, our doctrinal approach to warfare was largely in sync with our political objectives. We had a fairly limited goal for the war—evict the Iraqi military from Kuwait—and we were able to rapidly inflict crippling losses to effect that. Saddam, calculating the potential costs of continued fighting, chose to end the war at that point.

The inverse of this of course would be the GWOT conflicts, in which the United States military was mired in long-running insurgencies that were able to (somewhat) mitigate our technological advantages through asymmetric tactical innovations. The more important reason we lost these wars, though, was that our adversaries were simply much more willing to bear the costs associated with taking extremely high levels of casualties.

It was an arational (political) set of decisions that drove the continuance of fighting by our adversaries despite their almost unimaginable battlefield losses. There’s no “rational” way to quantify this aspect of war, but this aspect of war drives all other decisions that are made.

Leaders (and groups) believe in ideals that lie outside the realm of the strictly “rational.” As a general rule, a military can never actually create a plan that would be the most militarily expedient, because what is most militarily expedient depends on what political objectives its civilian leadership has.

This gets me back to what Palmer Luckey has to say about “simulations” and a potential war in the Taiwan Strait. The shape and form of the fighting are going to be dictated by very real human beings who are going to be making decisions in a crisis.

Does China strike Japan at the outset of fighting? It depends on the political calculus of Xi Jinping and whether he feels that the cost of making Japan a party to the conflict and risking greater international involvement outweighs the potential military benefits of striking American assets in theater. The same goes for South Korea and the Philippines.

The American response to a potential invasion is similarly fraught with unknowable choices. Do we fire first knowing we would look like potential aggressors internationally even if it means we would attrit an invasion force? Do we attempt to relieve Taiwan as a show of political resolve despite it putting our naval forces at risk? Do we expand a strike campaign to the Chinese mainland?

Now of course an LLM could make these choices based on military expediency. It’s nothing more than what’s referred to as a “net assessment.”

It would look something like this: they’ll have fewer assets than us, and we’ll have a strict advantage on paper if you look at the correlation of forces.

It was the same logic that led a lot of people (myself included) to expect the Russian military to prevail through overwhelming force in 2022 during the invasion of Ukraine. This of course wasn’t what happened in reality, and the will to fight of the Ukrainians turned the Russians back at the gates of Kyiv.

This is also the same logic that drove the German general staff in the First World War to advocate for an invasion through neutral Belgium into France at the outset of the war. The political implication of this of course was that Britain was given a legitimized reason for joining the conflict.

All of this is the reason why we have civilian control of the military, and why general officers incorporate civilian political directives into their war plans. When we engage in conflict, we’re doing so with a discrete political idea in mind about what the world looks like once the guns fall silent (or at least we ought to be).

There are simply too many discrete decisions made over the course of a conflict for an AI’s simulation of forces to ever account for them all and stay aligned with a political leader’s intent.

In the same way that a small change in the initial conditions of a weather system can put a hurricane hundreds of miles off course from the simulation, an AI cannot align itself with the arational and irrational forces that govern the conduct of war.

I think I’ve said enough about the political nature of war at this point, so I’m going to stop here. For the next part of this, I’m going to spend some time talking about the fog and friction of war, and how AI isn’t going to change that either.